TEEs From First Principles: SGX, SEV, and Everything In Between

Edits by Rahul Saxena (@saxenism)

Bluethroat Labs publishes foundational content like this because TEE security requires understanding the stack TEEs are built on. This is that foundation.

Note on images: All diagrams in this article are AI-generated and have been reviewed and curated by a human. They are accurate to the best of our knowledge, but AI-generated visuals can carry subtle errors. If you spot something wrong, please flag it. We genuinely welcome the correction.

A ground-up explanation of the hardware technology securing the most sensitive operations in modern computing.

Trusted Execution Environments (TEEs) are one of the most important and least understood pieces of infrastructure in modern computing. They appear in your smartphone, in cloud servers, in blockchain protocols, and increasingly in AI inference pipelines. Yet most developers who rely on TEE-based systems have only a surface-level understanding of what a TEE actually is and how it works.

This article builds that understanding from first principles, starting with the core problem TEEs solve, then going deep into the two most widely deployed implementations: Intel SGX and AMD SEV.

The Core Problem TEEs Solve

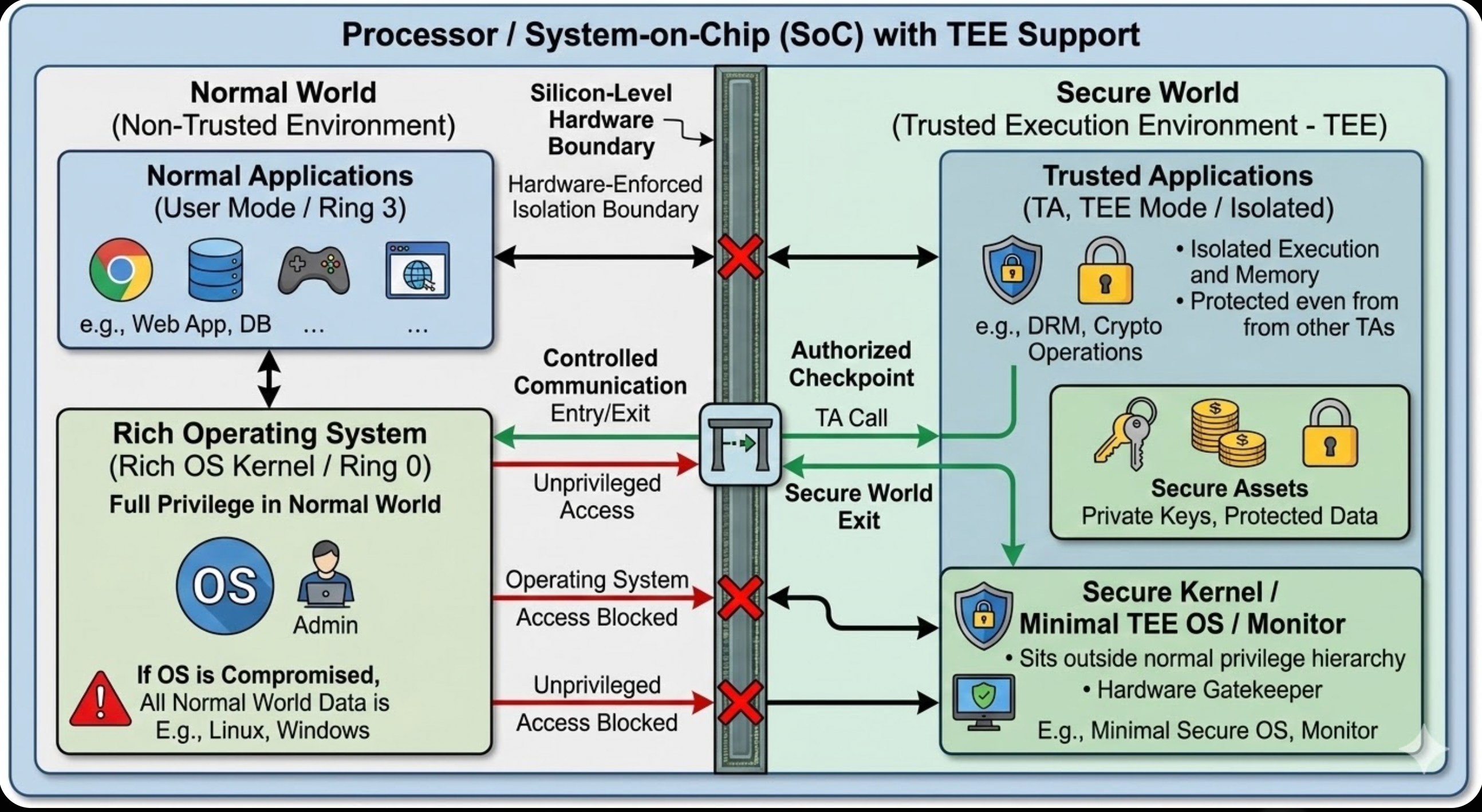

Consider a standard computer. The operating system (OS) is the most privileged piece of software on the machine. It manages memory, schedules processes, controls hardware access, and can inspect or modify any application running on top of it. This is by design, because the OS needs these capabilities to function.

But this creates a problem. If the OS is compromised, or if the machine is physically accessed by an adversary, all the sensitive data on that machine is potentially exposed. Private keys, passwords, transaction data, proprietary algorithms: none of it is safe from a sufficiently privileged attacker.

A TEE addresses this problem not through software controls, which an attacker can bypass by compromising the OS, but through hardware enforcement. The processor itself creates an isolated execution environment whose contents cannot be accessed by anything outside it, including the operating system, the hypervisor, and anyone with physical access to the machine.

The key insight is that this isolation is not a software abstraction. It is enforced at the silicon level.

A Mental Model

Think of your computer as a bank vault. Inside the vault, you keep sensitive assets: private keys, credentials, transaction data. The vault manager (the operating system) has access to everything inside and manages all operations. The problem is that the vault manager might be dishonest, or an attacker might break in and compromise them.

A TEE is a safe within the vault: a hardened compartment whose access controls are not managed by the vault manager but are enforced by the physical structure of the safe itself. Even if an attacker controls everything else in the vault, the contents of the safe remain inaccessible.

Critically, this analogy extends to the hardware level. Processors with TEE support add dedicated hardware for the trusted environment: access-control logic in the memory path, memory encryption engines, and in some designs a separate security processor. Trusted and untrusted code often share the same cores, but the hardware enforces that the two worlds cannot cross into each other without going through an authorised checkpoint.

How the Hardware Makes This Work

Privilege Levels

Modern processors support multiple privilege levels, often referred to as protection rings. The two most relevant are:

- Ring 0 (Kernel Mode): The highest privilege level. The operating system kernel runs here and has unrestricted access to CPU instructions and memory.

- Ring 3 (User Mode): The lowest privilege level. Regular applications run here with severely limited access to hardware.

The CPU acts as a security checkpoint that enforces these levels in hardware. When a user-mode application attempts to execute a privileged instruction (such as writing to memory protection registers), the CPU raises a hardware exception that only the kernel can handle. Software cannot bypass this; it is enforced at the transistor level.

TEEs extend this model by introducing an additional execution context that sits outside the normal privilege hierarchy entirely. Code running inside a TEE is isolated from both Ring 0 and Ring 3. Even the OS kernel cannot inspect or modify it.

Memory Management and Virtual Addressing

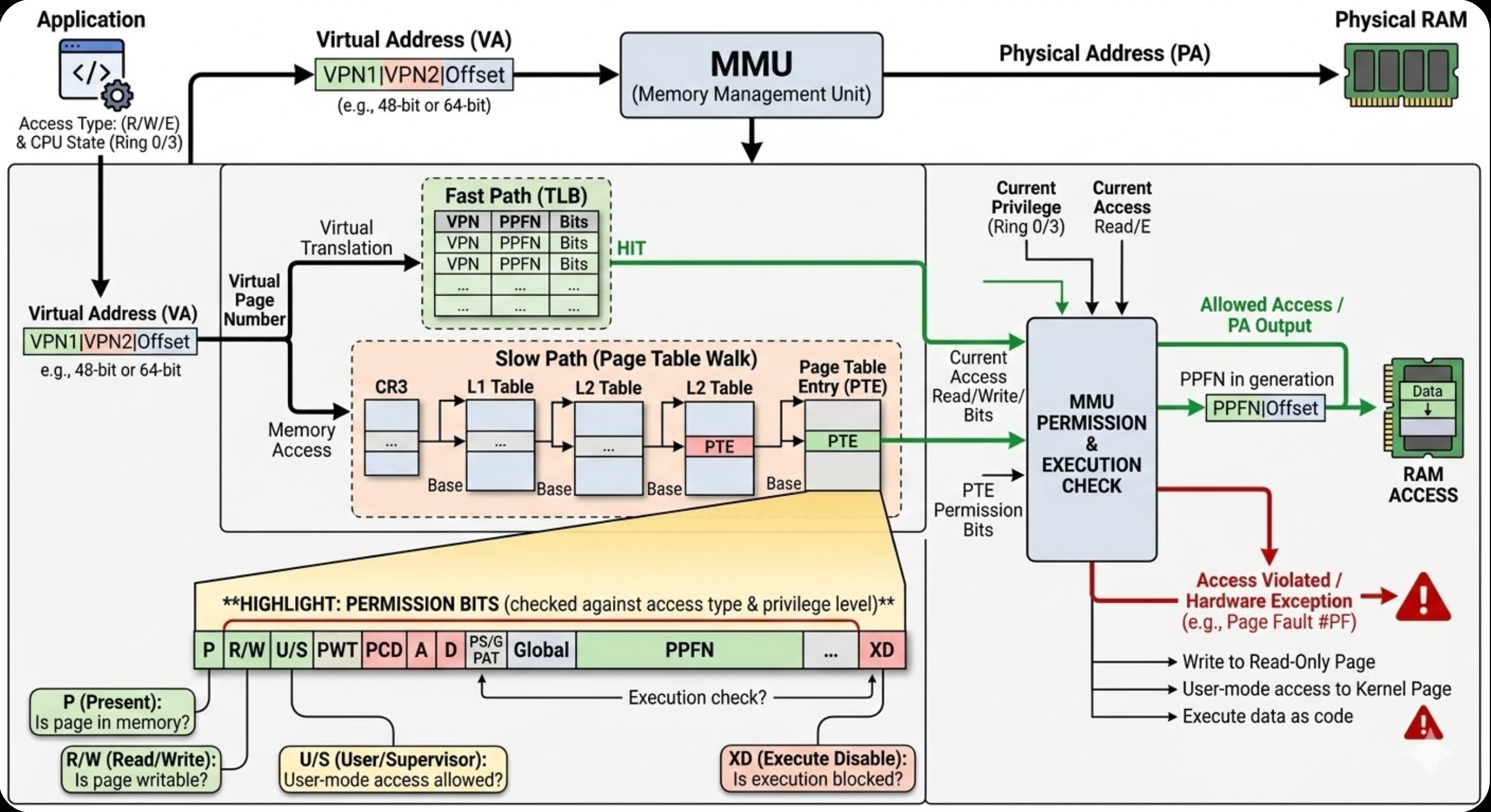

To understand how TEEs isolate memory, it helps to understand how modern processors manage memory access in general.

Every application operates on virtual addresses, which are what the application believes to be its memory locations. These virtual addresses are translated to physical addresses (actual RAM locations) by a hardware component called the Memory Management Unit (MMU). The MMU performs this translation using page tables, which are lookup tables stored in memory that map virtual addresses to physical addresses.

Each entry in a page table also carries permission bits: readable, writable, executable. When an application attempts to access memory, the MMU checks these permissions against the current privilege level. An attempt to write to a read-only page from user mode triggers a hardware exception.

The MMU uses a high-speed cache called the Translation Lookaside Buffer (TLB) to cache recent virtual-to-physical translations, avoiding the performance cost of a full page table lookup on every memory access.
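
The translation and permission checks described above can be sketched in a few lines of Python. This is a toy model, not how any real MMU is implemented: the names `ToyMMU`, `PageTableEntry`, and `PageFault` are invented for illustration, the page table is a flat dictionary rather than a multi-level structure, and the TLB never evicts entries.

```python
from dataclasses import dataclass

PAGE_SIZE = 4096  # 4 KiB pages, a common default


@dataclass(frozen=True)
class PageTableEntry:
    frame: int       # physical frame number
    writable: bool   # permission bit: may this page be written?
    user: bool       # permission bit: accessible from user mode (Ring 3)?


class PageFault(Exception):
    """Stands in for the hardware exception raised on an invalid access."""


class ToyMMU:
    def __init__(self, page_table):
        self.page_table = page_table   # virtual page number -> PageTableEntry
        self.tlb = {}                  # cache of recent translations
        self.tlb_hits = 0

    def translate(self, vaddr, *, write, user_mode):
        vpn, offset = divmod(vaddr, PAGE_SIZE)
        entry = self.tlb.get(vpn)
        if entry is not None:
            self.tlb_hits += 1
        else:
            entry = self.page_table.get(vpn)   # the "page table walk"
            if entry is None:
                raise PageFault(f"no mapping for virtual page {vpn}")
            self.tlb[vpn] = entry
        # Permission bits are checked on every access, cached or not.
        if user_mode and not entry.user:
            raise PageFault("user-mode access to a kernel-only page")
        if write and not entry.writable:
            raise PageFault("write to a read-only page")
        return entry.frame * PAGE_SIZE + offset


mmu = ToyMMU({
    0: PageTableEntry(frame=7, writable=False, user=True),
    1: PageTableEntry(frame=3, writable=True, user=False),
})
paddr = mmu.translate(0x0010, write=False, user_mode=True)
```

A second read of the same page is served from the TLB, while a user-mode write to page 0 raises `PageFault`, mirroring the read-only example in the text.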

TEEs leverage and extend this memory management architecture to enforce that secure-world memory cannot be accessed from normal-world code, regardless of privilege level.

Cache Architecture and Isolation

Modern CPUs have multiple levels of cache: L1 (smallest, fastest), L2, and L3 (largest, slowest). How a TEE treats these caches depends on the architecture. Some designs tag each cache line with the world or owner it belongs to, so that normal-world code cannot hit on secure-world lines.

Some designs also flush or partition selected microarchitectural state when switching between the normal world and the secure world. The goal is to limit timing attacks, in which one world infers information about the other by observing cache access patterns rather than by directly reading protected memory. In practice this isolation is not complete: shared caches remain a well-known side-channel surface for TEEs.

Trusted Applications

Code that runs inside a TEE is called a Trusted Application (TA). Trusted Applications have several properties enforced by the hardware:

Authentication: Only code signed by a trusted authority can be loaded into the TEE. The processor verifies cryptographic signatures before executing any code in the secure world.

Isolation from each other: Multiple Trusted Applications running inside a TEE cannot access each other's memory. TA1 cannot read TA2's data, and the OS cannot access the data of either.

Direct hardware access: Trusted Applications can access cryptographic accelerators and security features that normal applications cannot reach, without going through the OS.

Remote Attestation

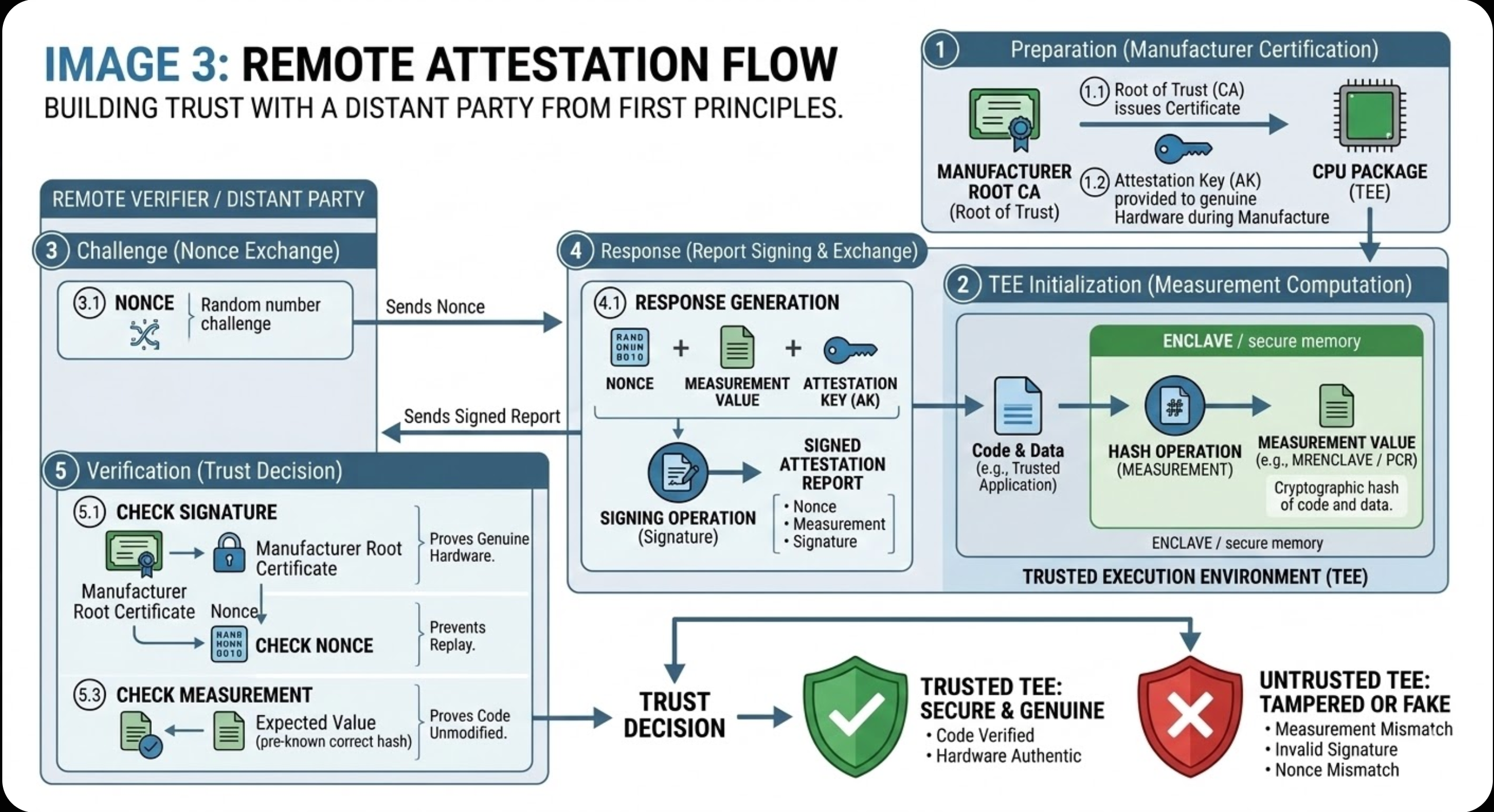

One of the most important capabilities of a TEE is remote attestation: the ability to prove to a remote party that a specific piece of code is running inside a legitimate, uncompromised TEE.

Without attestation, a TEE provides local security but no way to verify that security remotely. Attestation closes this gap.

Measurement: When a TEE is initialised, the processor computes a cryptographic hash of all code and data loaded into it. For Intel SGX, this is called the MRENCLAVE value. For TPM-based systems, measurements are stored in Platform Configuration Registers (PCRs).

Signing: The measurement is signed by an attestation key that chains back to the hardware manufacturer. For Intel SGX, the attestation is signed by a key on the platform whose certificate chain leads back to Intel. For TPM-based systems, an Attestation Key (AK) is generated inside the TPM and certified against the Endorsement Key (EK) that is provisioned into the TPM hardware at manufacture.

Verification: A remote verifier receives the signed attestation report and checks two things: that the signature is valid (proving the measurement came from genuine hardware) and that the measurement matches the expected value (proving the code has not been modified).

Nonce: To prevent replay attacks, the remote verifier sends a nonce (a random number used once) that must be included in the attestation report. A replayed attestation will not contain the correct nonce and will be rejected.
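
The four steps above (measure, sign, verify, nonce) can be sketched as follows. This is an illustration under a loud assumption: a shared HMAC key stands in for the hardware attestation key, whereas real TEEs use asymmetric signatures backed by a manufacturer certificate chain. The function names `measure`, `make_report`, and `verify_report` are invented for this sketch.

```python
import hashlib
import hmac
import secrets

# ASSUMPTION: a shared HMAC key stands in for the hardware attestation key.
# Real TEEs sign with an asymmetric key certified by the manufacturer.
HARDWARE_KEY = secrets.token_bytes(32)


def measure(code: bytes) -> bytes:
    """Hash of everything loaded into the TEE (the 'measurement')."""
    return hashlib.sha256(code).digest()


def make_report(code: bytes, nonce: bytes, key: bytes = HARDWARE_KEY) -> dict:
    """What the simulated hardware does: measure, bind the nonce, sign."""
    measurement = measure(code)
    signature = hmac.new(key, measurement + nonce, hashlib.sha256).digest()
    return {"measurement": measurement, "nonce": nonce, "signature": signature}


def verify_report(report: dict, expected_measurement: bytes, nonce: bytes,
                  key: bytes = HARDWARE_KEY) -> bool:
    """What the remote verifier does: check signature, measurement, and nonce."""
    expected_sig = hmac.new(
        key, report["measurement"] + report["nonce"], hashlib.sha256
    ).digest()
    signature_ok = hmac.compare_digest(report["signature"], expected_sig)
    code_ok = hmac.compare_digest(report["measurement"], expected_measurement)
    fresh = hmac.compare_digest(report["nonce"], nonce)
    return signature_ok and code_ok and fresh


trusted_code = b"def sign(tx): ..."
nonce = secrets.token_bytes(16)
report = make_report(trusted_code, nonce)
```

Verifying `report` against `measure(trusted_code)` and the original `nonce` succeeds. Presenting the same report against a fresh nonce, or a report produced from modified code, fails.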

The Major Implementations: Different Approaches to the Same Problem

TEEs are not a single technology. Different chip manufacturers have taken fundamentally different architectural approaches, each with different trade-offs.

Intel SGX: Creates a small, isolated memory region (called an enclave) inside a single application. Only the sensitive parts of your code run inside the enclave, like a bulletproof compartment built into a larger building.

AMD SEV: Protects entire virtual machines. Rather than isolating a portion of an application, AMD encrypts the full memory of a VM so that the cloud provider's hypervisor cannot read it.

ARM TrustZone: Splits the entire processor into two parallel worlds: a Normal World where the regular OS runs, and a Secure World for sensitive operations.

RISC-V Keystone: An open-source, customisable approach where the TEE can be tailored to specific security requirements.

The fundamental trade-off across all of these is granularity versus ease of adoption. Fine-grained protection like SGX protects only what you designate as sensitive, but requires significant code changes and has memory constraints. Coarse-grained protection like AMD SEV protects entire VMs with minimal code changes, but requires trusting more of the software stack within the VM.

Intel SGX: Application-Level Enclaves

The Core Idea

SGX, introduced by Intel in 2015, allows individual applications running at Ring 3 (user mode) to create isolated memory regions called enclaves. This was a significant architectural shift: traditionally, only the kernel (Ring 0) could create isolated memory regions. SGX pushed this capability down to the application level.

An enclave is a contiguous region in an application's virtual address space that is protected by the processor itself. The OS, the hypervisor, and other applications are physically prevented from reading or modifying enclave contents, regardless of their privilege level.

The Enclave Lifecycle

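Creation: The application requests enclave creation through the OS's SGX driver. The underlying CPU instructions (ECREATE, EADD, EINIT) are privileged, so the kernel issues them on the application's behalf, but it is the CPU, not the kernel, that creates the enclave and begins tracking its pages.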

Loading: Sensitive code and data are loaded into the enclave. As each page is loaded, the CPU computes a running cryptographic measurement of everything entering the enclave.

Initialisation: The enclave is locked with EINIT. No further modifications are permitted, and the CPU finalises the cryptographic measurement (MRENCLAVE), which uniquely identifies the enclave's exact code and data.

Execution: The application calls into the enclave using EENTER. The CPU switches into a protected execution mode. Code outside the enclave cannot observe register state or memory contents during enclave execution.

Exit: EEXIT returns control to the normal application. The enclave remains in memory and can be re-entered.
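
The lifecycle above behaves like a small state machine, which the following sketch models. It is a simplification for illustration only: `ToyEnclave` is an invented class, the measurement is a plain running SHA-256 over page contents (the real MRENCLAVE computation also covers page metadata), and a single thread of execution is assumed.

```python
import hashlib
from enum import Enum, auto


class State(Enum):
    CREATED = auto()
    INITIALISED = auto()
    RUNNING = auto()


class ToyEnclave:
    def __init__(self):                      # models ECREATE
        self.state = State.CREATED
        self._hash = hashlib.sha256()
        self.pages = []
        self.mrenclave = None

    def add_page(self, page: bytes) -> None:  # models EADD
        if self.state is not State.CREATED:
            raise RuntimeError("enclave is locked; pages can no longer be added")
        self.pages.append(page)
        self._hash.update(page)              # running measurement

    def init(self) -> bytes:                 # models EINIT
        if self.state is not State.CREATED:
            raise RuntimeError("enclave already initialised")
        self.mrenclave = self._hash.digest()  # measurement is now final
        self.state = State.INITIALISED
        return self.mrenclave

    def enter(self) -> None:                 # models EENTER
        if self.state is not State.INITIALISED:
            raise RuntimeError("enclave must be initialised and not running")
        self.state = State.RUNNING

    def exit(self) -> None:                  # models EEXIT
        if self.state is not State.RUNNING:
            raise RuntimeError("not inside the enclave")
        self.state = State.INITIALISED       # stays resident; can be re-entered


enclave = ToyEnclave()
enclave.add_page(b"signing code")
enclave.add_page(b"private key material")
identity = enclave.init()
```

Changing a single byte of any page before `init()` yields a different `identity`, which is the property remote attestation relies on.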

Memory Architecture: The Enclave Page Cache

SGX allocates a special encrypted region of physical memory called the Enclave Page Cache (EPC) for storing enclave pages. On first-generation SGX hardware, the EPC is limited to 128 MB, and part of that is consumed by metadata, leaving roughly 93 MB usable by enclaves. Intel's later Ice Lake server generation expanded this significantly, but the 128 MB constraint is important to understand when evaluating first-gen SGX deployments.

Because enclaves often need more memory than the EPC can hold, SGX implements paging. When the EPC is full, the OS evicts an enclave page to normal memory; before eviction, the CPU encrypts and integrity-protects the page. When the enclave later accesses that page, it is loaded back into the EPC, and the CPU verifies its integrity before the enclave can use it. This paging is expensive, at approximately 40,000 CPU cycles per page swap, which makes memory-intensive SGX applications significantly slower than their non-enclave equivalents.
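
To get a feel for what 40,000 cycles per swap means, here is a back-of-the-envelope calculation. The 4 KiB page size is the SGX page size; the 3 GHz clock is an assumption chosen for round numbers, and the function name is invented.

```python
PAGE_SIZE = 4 * 1024          # bytes per EPC page
CYCLES_PER_SWAP = 40_000      # approximate figure quoted in the text
CLOCK_HZ = 3_000_000_000      # ASSUMPTION: 3 GHz core clock


def paging_overhead_seconds(bytes_swapped: int) -> float:
    """Time spent purely on EPC page swaps for a given volume of paged data."""
    pages = -(-bytes_swapped // PAGE_SIZE)   # ceiling division
    return pages * CYCLES_PER_SWAP / CLOCK_HZ


one_gib = paging_overhead_seconds(1024 ** 3)
```

Under these assumptions, paging 1 GiB through the EPC costs roughly 3.5 seconds of swap overhead alone (262,144 pages at 40,000 cycles each), before any useful work is done.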

Technical Deep Dive: Memory Isolation

EPCM (Enclave Page Cache Map): The CPU maintains a dedicated hardware data structure that tracks every page in every enclave. Each entry records which enclave owns the page, the page's virtual address, access permissions, and page type. Any access violation triggers a hardware fault that the OS cannot override.

Memory Encryption Engine (MEE): Intel's MEE encrypts enclave pages using AES-CTR mode with integrity verification:

- Encryption: When enclave data leaves the CPU package, it is encrypted with AES-128 using a key derived from a CPU-internal root key that is never exposed to software.

- Integrity Tree: The MEE maintains a Merkle tree of integrity checksums. Each memory block carries a MAC (Message Authentication Code) that detects any tampering.

- Anti-replay: Version counters prevent an attacker from swapping in old versions of encrypted pages.
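
The integrity and anti-replay properties can be illustrated with a small sketch. It deliberately omits encryption and the tree structure: each block simply carries a MAC over its address, version counter, and contents, with the counters held in trusted storage. `ToyIntegrityMemory` is an invented name, and HMAC-SHA-256 stands in for whatever MAC the hardware actually uses.

```python
import hashlib
import hmac
import secrets


class ToyIntegrityMemory:
    """Untrusted storage plus trusted per-block version counters."""

    def __init__(self):
        self._key = secrets.token_bytes(32)  # stands in for the CPU-internal key
        self._versions = {}                  # trusted: kept "inside the CPU"
        self.dram = {}                       # untrusted: an attacker can modify it

    def _mac(self, addr: int, version: int, data: bytes) -> bytes:
        msg = addr.to_bytes(8, "big") + version.to_bytes(8, "big") + data
        return hmac.new(self._key, msg, hashlib.sha256).digest()

    def write(self, addr: int, data: bytes) -> None:
        version = self._versions.get(addr, 0) + 1   # bump counter on every write
        self._versions[addr] = version
        self.dram[addr] = (data, self._mac(addr, version, data))

    def read(self, addr: int) -> bytes:
        data, tag = self.dram[addr]
        expected = self._mac(addr, self._versions[addr], data)
        if not hmac.compare_digest(tag, expected):
            raise ValueError("integrity check failed: tampered or replayed block")
        return data


mem = ToyIntegrityMemory()
mem.write(0x40, b"balance=100")
stale = mem.dram[0x40]            # attacker snapshots the old block and its MAC
mem.write(0x40, b"balance=0")
```

If the attacker now restores `stale` into `mem.dram[0x40]`, the next `read` fails: the MAC was valid for version 1, but the trusted counter says version 2. A tree structure serves the same purpose at scale, so that only a small root of counters needs to stay on-die.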

When to Use SGX

SGX is well suited to small, security-critical operations such as cryptographic key management, password validation, and signing, particularly in environments where the OS or cloud provider cannot be fully trusted. It is not well suited to large-scale applications requiring gigabytes of secure memory, or to legacy codebases that cannot be refactored to separate sensitive from non-sensitive logic.

AMD SEV: VM-Level Encryption

AMD's Physical Architecture: Chiplets

To understand AMD SEV, it helps to first understand how AMD builds its processors, because the security architecture is deeply integrated with the physical design.

AMD has built its server processors from multiple dies on a single package since the first Zen generation in 2017. Since Zen 2 (2019), that package consists of two types of small chips called chiplets:

CCDs (Core Complex Dies): These contain the actual CPU cores. On Zen 2, each CCD holds 8 CPU cores organised into two groups of 4 called CCXs (Core Complexes), each with its own shared L3 cache. Later generations changed this layout, for example by merging the 8 cores into a single CCX.

IOD (I/O Die): The central hub containing memory controllers, PCIe lanes, and the pathways between CCDs. The IOD is where memory encryption happens.

The components are connected by AMD's proprietary high-speed interconnect called Infinity Fabric. A critical observation for security: data is only encrypted outside the processor package, on the memory bus between the CPU and DRAM. Inside the chip, data is in plaintext but tagged with an identity marker for isolation.

The AMD Secure Processor

At the heart of AMD's security model is the AMD Secure Processor (AMD-SP): a physical ARM Cortex-A5 CPU core integrated directly into the processor die, with its own dedicated RAM and storage, completely separate from the main CPU cores.

The AMD-SP is the security authority for the entire processor package. It generates encryption keys using a hardware random number generator, manages which virtual machine gets which key, verifies firmware integrity during boot, and handles remote attestation. The main CPU cores never see encryption keys in the clear. The AMD-SP generates keys, stores them in dedicated hardware registers inside the memory controllers, and those keys never leave the silicon package unencrypted.

Memory Encryption: From SME to SEV-SNP

AMD's memory encryption architecture has four layers of increasing security, each generation closing a gap left open by the previous one.

SME (Secure Memory Encryption) is the foundation. It encrypts all system memory using a single key, transparently. When the CPU writes data to memory, it passes through an AES encryption engine inside the memory controller. The key is generated by the AMD-SP on every system reset and never exposed to any software. SME protects against physical memory attacks but does not isolate VMs from each other.

SEV (Secure Encrypted Virtualization) extends SME by assigning each VM its own unique encryption key, using ASID (Address Space ID) tags to track ownership. Each VM is assigned an ASID number. Data inside the CPU is tagged with its owner's ASID, and the hardware enforces that only code running with a given ASID can access data tagged with that ASID. When data leaves the CPU to DRAM, it is encrypted with the key for that ASID. A hypervisor attempting to read a VM's memory sees only encrypted data it cannot decrypt.

SEV-ES (Encrypted State) closes a gap in basic SEV: when a VM exits to the hypervisor, CPU registers were previously exposed. A malicious hypervisor could trigger frequent VM exits and reconstruct the VM's execution state from register values. SEV-ES encrypts all CPU register state during VM exits into a special encrypted memory area called the VMSA (Virtual Machine Save Area), encrypted with the VM's key. Registers are zeroed before the hypervisor sees them and restored from the VMSA before the VM resumes. After SEV-ES, the hypervisor can no longer observe register values such as the instruction pointer and stack pointer, and so can no longer follow the program's control flow through them.
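
The save, scrub, and restore sequence can be modelled in a few lines. This is a sketch with invented names (`ToyVcpu`, `vm_exit`, `vm_resume`); the private `_vmsa` attribute stands in for the encrypted save area, and the controlled channel a real guest uses to share selected values with the hypervisor is left out.

```python
class ToyVcpu:
    def __init__(self):
        self.registers = {"rip": 0, "rsp": 0, "rax": 0}
        self._vmsa = None   # stands in for the encrypted VMSA page

    def vm_exit(self) -> dict:
        """Save guest state to the VMSA, then scrub what the hypervisor can see."""
        self._vmsa = dict(self.registers)
        for name in self.registers:
            self.registers[name] = 0
        return dict(self.registers)   # the hypervisor's view of the registers

    def vm_resume(self) -> None:
        """Restore guest state from the VMSA before the guest runs again."""
        self.registers = dict(self._vmsa)


vcpu = ToyVcpu()
vcpu.registers.update(rip=0x401000, rsp=0x7FFE0000, rax=42)
hypervisor_view = vcpu.vm_exit()
vcpu.vm_resume()
```

The hypervisor's view contains only zeros, while the guest resumes with exactly the register values it had before the exit.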

SEV-SNP (Secure Nested Paging) is the most recent and most important generation, and the one used in serious production deployments today. SEV-ES still had a remaining gap: a malicious hypervisor could remap or swap the physical memory pages assigned to a VM without the VM's knowledge, silently feeding it attacker-controlled data. SEV-SNP closes this by adding hardware-enforced memory integrity. A Reverse Map Table (RMP) tracked by the CPU records the ownership of every physical memory page. The VM can verify that each page it uses has not been remapped or replaced by the hypervisor. Any unauthorised remapping triggers a hardware fault before the VM ever sees the corrupted data. SEV-SNP is what makes AMD's TEE model viable for high-security cloud deployments.
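
The ownership checks can be sketched as a lookup table keyed by physical page. This is a simplified model with invented names (`ToyRmp`, `RmpEntry`, `RmpViolation`); it assumes ASID 0 denotes the hypervisor and tracks only an owner, an expected guest address, and a validated flag per page.

```python
from dataclasses import dataclass

HYPERVISOR = 0   # ASSUMPTION: ASID 0 denotes hypervisor-owned pages in this sketch


@dataclass
class RmpEntry:
    owner_asid: int
    guest_page: int          # guest address this physical page is meant to back
    validated: bool = False  # has the guest accepted this mapping?


class RmpViolation(Exception):
    """Stands in for the hardware fault raised when an RMP check fails."""


class ToyRmp:
    def __init__(self):
        self.entries = {}    # system-physical page number -> RmpEntry

    def assign(self, sys_page: int, asid: int, guest_page: int) -> None:
        """Hypervisor hands a physical page to a guest (not yet validated)."""
        self.entries[sys_page] = RmpEntry(asid, guest_page)

    def guest_validate(self, sys_page: int, asid: int, guest_page: int) -> None:
        """Guest accepts the page at a specific guest address."""
        entry = self.entries.get(sys_page)
        if entry is None or entry.owner_asid != asid or entry.guest_page != guest_page:
            raise RmpViolation("page is not assigned to this guest at this address")
        entry.validated = True

    def guest_access(self, sys_page: int, asid: int, guest_page: int) -> None:
        entry = self.entries.get(sys_page)
        if (entry is None or entry.owner_asid != asid
                or entry.guest_page != guest_page or not entry.validated):
            raise RmpViolation("guest access to a remapped or unvalidated page")

    def hypervisor_write(self, sys_page: int) -> None:
        entry = self.entries.get(sys_page)
        if entry is not None and entry.owner_asid != HYPERVISOR:
            raise RmpViolation("hypervisor write to a guest-owned page")


rmp = ToyRmp()
rmp.assign(sys_page=100, asid=1, guest_page=5)
rmp.guest_validate(100, asid=1, guest_page=5)
rmp.guest_access(100, asid=1, guest_page=5)   # allowed: owned and validated
```

If the hypervisor tries to back guest page 5 with a different physical page, or re-assigns page 100 behind the guest's back, the next `guest_access` raises `RmpViolation` because the new mapping was never validated by the guest. A direct `hypervisor_write(100)` fails for the same table.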

Remote Attestation in AMD SEV

AMD's attestation model works similarly to the general model described earlier in this article, with AMD-specific components.

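When an SEV-enabled VM is launched, the AMD-SP computes a launch measurement: a cryptographic hash of the VM's initial memory contents and configuration (SHA-256 in the original SEV, SHA-384 in SEV-SNP). The resulting attestation is signed with a platform key that is itself certified by AMD, creating a verifiable chain of trust back to AMD's root of trust. In the original SEV this chain runs through the Platform Endorsement Key (PEK); in SEV-SNP, attestation reports are signed with the Versioned Chip Endorsement Key (VCEK).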

The attestation flow:

- The VM owner prepares a secret (such as a disk encryption key) they will only release after attestation succeeds.

- The cloud provider launches the VM. The AMD-SP measures and signs the launch state.

- The VM owner sends a nonce. The VM requests a signed attestation report from the AMD-SP including the nonce, measurement, and configuration.

- The VM owner verifies the signature, the AMD certificate chain, the launch measurement, and the nonce.

- If all checks pass, the VM owner sends the secret encrypted under a session key shared with the VM.

If the hypervisor tampered with the VM's code before launch, the launch measurement would not match, and the VM owner would withhold the secret.

SGX vs SEV: Two Answers to the Same Question

Intel SGX and AMD SEV represent fundamentally different answers to the question of how to protect sensitive computation from a compromised or untrusted privileged layer.

SGX answers at the application level: isolate only the sensitive parts, with fine-grained control and significant engineering overhead. SEV answers at the VM level: encrypt everything and prevent the hypervisor from seeing any of it, with minimal code changes but a broader trusted computing base within the VM.

Neither approach is strictly superior. The right choice depends on your threat model, your application architecture, and your tolerance for engineering complexity.

ARM TrustZone and RISC-V Keystone take different approaches again, splitting the processor at the hardware level rather than at the application or VM level. Those architectures will be covered in a follow-up article.

Further Reading

For a comprehensive reference on TEE security as it applies to web3 protocols, see the Bluethroat Labs TEE Security Handbook.

Abhimanyu Gupta is a security consultant with Bluethroat Labs.